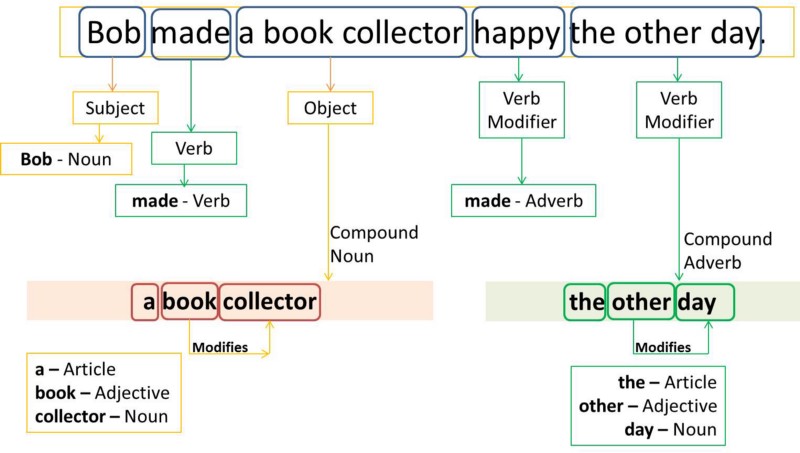

In corpus linguistics, part-of-speech tagging (POS tagging, PoS tagging, or POST), also called grammatical tagging, is the process of marking up a word in a text (corpus) as corresponding to a particular part of speech, based on both its definition and its context. A simplified form of this is commonly taught to school-age children, in the identification of words as nouns, verbs, adjectives, adverbs, etc. Once performed by hand, POS tagging is now done in the context of computational linguistics, using algorithms which associate discrete terms, as well as hidden parts of speech, with a set of descriptive tags. POS-tagging algorithms fall into two distinctive groups: rule-based and stochastic. Brill's tagger, one of the first and most widely used English POS taggers, employs rule-based algorithms.

Part-of-speech tagging is harder than just having a list of words and their parts of speech, because some words can represent more than one part of speech at different times, and because some parts of speech are complex or unspoken. This is not rare: in natural languages (as opposed to many artificial languages), a large percentage of word-forms are ambiguous.

Baseline Model: Most Frequent Class tagger

Before building the HMM POS tagger, a simpler baseline tagger was built as a reference point for evaluating tagger performance. It simply chooses the tag most frequently assigned to each word. This 'Most Frequent Class' (MFC) tagger inspects each observed word in the sequence and assigns it the label that was most often assigned to that word in the corpus.

First, we need to compute the frequency of each tag being assigned to each word.

    from collections import Counter, defaultdict

    def pair_counts(sequences_A, sequences_B):
        """Return a dictionary keyed to each unique value in the first sequence list
        that counts the number of occurrences of the corresponding value from the
        second sequence list.
        """
        counts = defaultdict(Counter)
        # count how often each value from sequences_B is paired with each key from sequences_A
        for tag, word in zip(sequences_A, sequences_B):
            counts[tag][word] += 1
        return counts

    # using pair_counts() to calculate the frequency of each tag being assigned
    word_counts = pair_counts(training_set.X, training_set.Y)

Next, with the results from word_counts, create a lookup table, mfc_table, which contains the tag label most frequently assigned to each word.

    # if more than 1 tag, use the function 'get_maxval_key' defined above
    # to get the tag with max value (most frequent class label)

The mfc_table is then used to create an MFC tagger model, which predicts the tags of words in the training and testing datasets with accuracies of 95.7% and 93% respectively.

Here is a simplified example to illustrate the process described above. This simple tagger will fail to accurately tag a word that has more than one tag. The MFC tagger always tags a word with its most frequent tag, regardless of context and the position of the word (in the example, the first "will" follows a noun and the second "will" follows a verb).

The HMM tagger has one hidden state for each possible tag and is parameterised by two distributions: the emission probabilities and the transition probabilities.

Emission probabilities give the conditional probability of observing a given word from each hidden state. The example in the figure below (left) illustrates how the emission probabilities are calculated from a simple corpus listed at the far right of the figure (four sentences with POS tags). For each tag (N, M, V), the emission probabilities add up to 1.

Transition probabilities, on the other hand, give the conditional probability of moving between tags during the sequence. For example, in the first row of the transition probabilities table, tag N appears 3 times at the starting state and tag M appears once. Thus, the transition probability from the starting state to N is 3/4, and from the starting state to M it is 1/4. The probabilities in each row of the transition probabilities table add up to 1.

The code snippet below creates the basic HMM tagger.

#PART OF SPEECH TAGGER CODE#

First, instantiate the HiddenMarkovModel (imported from the pomegranate library). Then, add one state per tag for the emission probabilities, using a for loop.
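Independently of pomegranate, the counting behind the MFC lookup table and the HMM probability tables can be sketched in plain Python. This is only a minimal illustration under stated assumptions: the corpus is a hypothetical stand-in for the four tagged sentences in the figure, chosen so that three sentences start with N and one with M, and the names `corpus`, `normalize`, and the `<start>`/`<end>` markers are invented for the sketch rather than taken from the original code.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus of (word, tag) pairs; tags: N (noun), M (modal), V (verb).
# Assumption: a stand-in for the figure's four sentences, not the original data.
corpus = [
    [("mary", "N"), ("jane", "N"), ("can", "M"), ("see", "V"), ("will", "N")],
    [("spot", "N"), ("will", "M"), ("see", "V"), ("mary", "N")],
    [("will", "M"), ("jane", "N"), ("spot", "V"), ("mary", "N")],
    [("mary", "N"), ("will", "M"), ("pat", "V"), ("spot", "N")],
]

# Assumed boundary markers for the start and end of a sentence.
START, END = "<start>", "<end>"

word_tag_counts = defaultdict(Counter)    # word -> tag counts (MFC baseline)
emission_counts = defaultdict(Counter)    # tag -> word counts
transition_counts = defaultdict(Counter)  # previous tag -> next tag counts

for sentence in corpus:
    previous = START
    for word, tag in sentence:
        word_tag_counts[word][tag] += 1
        emission_counts[tag][word] += 1
        transition_counts[previous][tag] += 1
        previous = tag
    transition_counts[previous][END] += 1


def normalize(counter):
    """Turn raw counts into probabilities that sum to 1."""
    total = sum(counter.values())
    return {key: count / total for key, count in counter.items()}


# MFC table: each word maps to its single most frequent tag.
mfc_table = {word: tags.most_common(1)[0][0] for word, tags in word_tag_counts.items()}

# HMM parameters: one probability row per tag (or per previous tag).
emission_probs = {tag: normalize(words) for tag, words in emission_counts.items()}
transition_probs = {prev: normalize(nxt) for prev, nxt in transition_counts.items()}

print(mfc_table["will"])        # M
print(transition_probs[START])  # {'N': 0.75, 'M': 0.25}
```

In this toy corpus the MFC table maps every "will" to M, including the one occurrence tagged N, which is the context-blindness that the transition probabilities of an HMM are meant to address.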